Artificial intelligence is driving misinformation through deep fakes

With the proliferation of artificial intelligence (AI) adoption across the world, misinformation is on the rise, especially through deep fakes. What's the way forward?

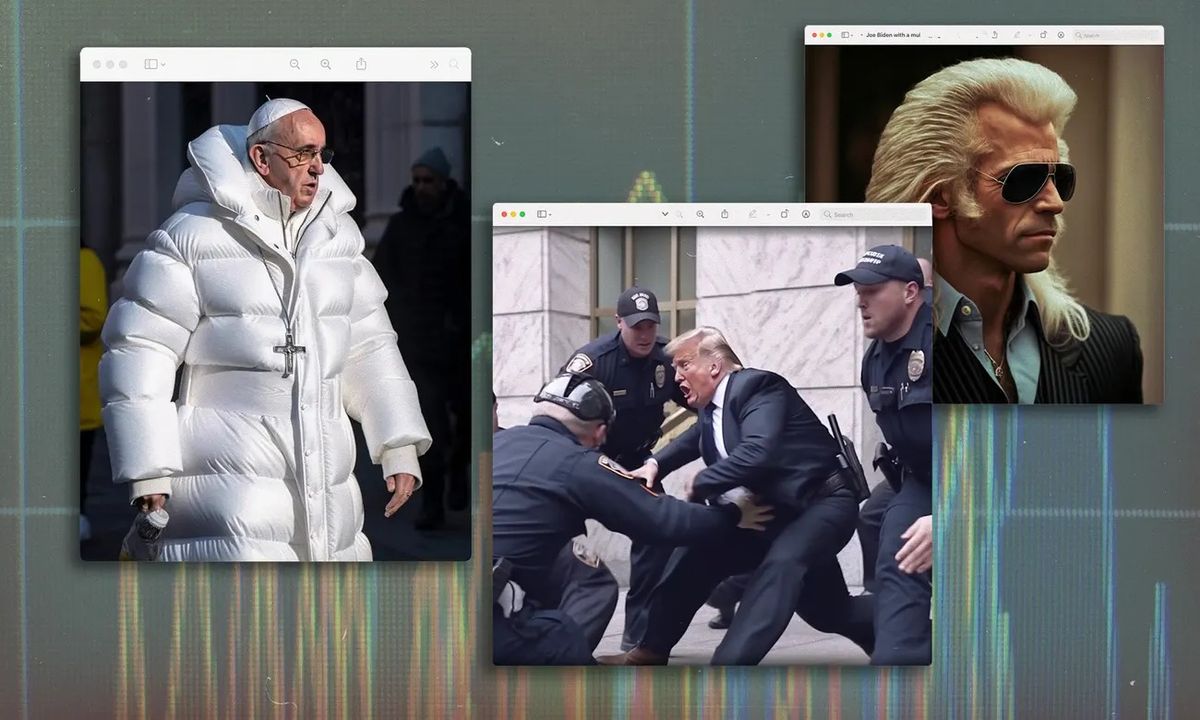

When the picture of Pope Francis in a white Balenciaga coat went viral last week, several people thought it was real.

The image looked real, but it was easy for me to tell at first glance that it was fake, not because I am a journalist but because I'm a Catholic: the Pope wears only one ring, and there was more than one in the picture. Ryan Broderick, a former BuzzFeed News reporter, described it as "the first real mass-level AI misinformation case".

According to Pablo Xavier, the creator of the AI-generated image of the Pope, "I just thought it was funny to see the Pope in a funny jacket...I didn't want it to blow up like that". Xavier's image came only a few days after AI-generated images of Donald Trump being arrested by police in New York also went viral.

As big tech companies like Microsoft and Google race to capture the AI market, safety is likely to become an afterthought. A few days ago, Microsoft laid off its entire ethics and society team, which taught product managers and developers how to build AI tools responsibly.

Recall that earlier in January, Microsoft announced the third phase of its long-term partnership with OpenAI through a multiyear, multibillion-dollar investment to accelerate AI breakthroughs. The investment is reportedly worth $10 billion. Microsoft has already incorporated GPT-3, DALL-E and other OpenAI technologies into its products. Most notably, GitHub, a popular online service for developers owned by Microsoft, offers Copilot, a tool that can automatically generate snippets of computer code.

As relevant as these tools might be, they will also drive misinformation if they are not properly regulated. After his AI-generated image of Pope Francis went viral, Xavier, realising the potential impact of the technology, said, "It is going to get serious if they don’t start implementing laws to regulate it".

Dayo Isreal, the national youth leader of Nigeria's ruling All Progressives Congress (APC), on Wednesday, March 28, tweeted that his car was burgled in Abuja and that he was robbed of his valuables. Most Nigerian Twitter users responded to his tweet with sarcasm, but one of the responses came wrapped in a deep fake.

In her tweet, Sunflower sarcastically alleged that the image shared by Isreal was from a fight scene in John Wick, a 2014 American action thriller film, and she shared a doctored Google search page to back her allegation. While some understood it was sarcasm, several others believed the tweet, which has garnered over 2.1 million views.

The APC youth leader had to share more evidence to debunk the now-viral claim.

You’re a a big fat liar, Dayo. you took this image from a fight scene in John Wick 3

— Sunflower🌻 (@baby_girl_ola) March 29, 2023

Why are you painting Abuja bad with fake news fgs? Stop giving Abuja and Nigeria a bad image!! Nothing like this ever happened.

Fake news merchant!!! ❌❌❌ https://t.co/HSKFxs7GMM pic.twitter.com/qXdK03W8ol

Fact-checkers suggest how to tackle deep fakes

"AI will not only deepen misinformation, but it will also aid in spreading it," Adesola Ikulajolu, Managing Editor, ROUNDCHECK, a Nigerian youth-driven fact-checking platform, told Benjamindada.com. He described the proliferation of AI as a threat to the information ecosystem.

"Moderation is hard and we’ll be shipping improved systems soon," Midjourney CEO and founder David Holz stated. "We are taking lots of feedback and ideas from experts and the community and are trying to be thoughtful".

During the just-concluded Nigerian election, "deep fakes [were] one of the tools that were deployed by political gladiators to sway supporters and play on their intelligence," Ikulajolu added.

"Among methods to spot deep fakes, journalists can review video content for glitches and distortions, apply existing verification and forensic techniques, and use AI-based approaches to spot deep fakes when available. An increase in media literacy tools and more training on manipulated media for journalists is also essential," Mya Zepp, a Disarming Disinformation Intern at the International Centre for Journalists, says.

Like Zepp, Ikulajolu also sees AI as a double-edged sword. "As much AI can be threatening to the information ecosystem in Africa, we can also leverage it to the good use of our daily activities," he said. "If AI can build deep fakes, AI should also build what can be used to spot such deep fakes."


Just like the tool we used earlier to check the authenticity of the tweet, there are other tools, such as Sensity, Deepware Scanner, Microsoft Video Authenticator, DeepDetector & DeepfakeProof from DuckDuckGoose, Deepfake-o-Meter and Reality Defender, that can help you detect whether a piece of content is a deep fake.

Since some deep fakes are satire, it's important for individuals to also understand the context behind them. For instance, Sunflower's tweet was satire.

Generally, before you disseminate information, use the SIFT method: "Stop, Investigate, Find better coverage, and Trace claims, quotes and media to the original context."
